I recently read an article on InfoQ about test automation, and the part that stuck with me most was about testing tools.

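Ten years ago, the testing tool market was dominated by roughly three companies. I no longer remember their names clearly, and QTP is about the only one still alive today. Back then the market leader's share was about twice that of the runner-up, and open source testing tools had not yet gained any traction.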

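Somewhere along the way the world changed. Google Trends shows that QTP, which once held about 30% of the market, has declined badly, while open source testing tools have swept the field.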

http://www.google.com.tw/trends/explore#q=qtp%2C%20%2Fm%2F025sf8g%2C%20Robot%20Framework&cmpt=q

Why did this happen? My guesses are as follows.

1. Poor quality

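The companies that wrote these tools did not have particularly good development quality themselves, so the products they shipped were riddled with bugs. We used to mock them every time, asking whether they had ever tested their own product with their own tool.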

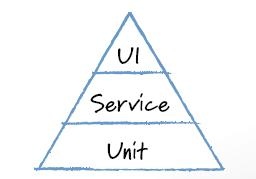

2. GUI automation is actually the least useful kind

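As Mike Cohn says, if you are going to automate, GUI automation is the least recommended place to do it. These tools often cannot recognize many of the objects on the screen. On top of that, if the resolution changes, or the test machine's CPU speed is very different, the recorded scripts frequently fail. After a while you stop wanting to invest in them.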
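To make the CPU speed point concrete, here is a minimal sketch of why a recorded script with a fixed delay breaks on a slower machine, while polling for the control survives. Everything in it is hypothetical and for illustration only: `SlowButton` stands in for a GUI control that appears after some delay, and `wait_until` is a made-up helper, not the API of QTP or any other tool.

```python
import time


def wait_until(condition, timeout=5.0, interval=0.05):
    """Poll `condition` until it returns True or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class SlowButton:
    """Hypothetical stand-in for a GUI control that shows up after a delay."""

    def __init__(self, appears_after):
        self._ready_at = time.monotonic() + appears_after

    def is_visible(self):
        return time.monotonic() >= self._ready_at


def replay_with_fixed_delay(button, delay):
    # Roughly what a recorded script does: sleep for the time observed
    # during recording, then assume the control is already there.
    time.sleep(delay)
    return button.is_visible()


def replay_with_polling(button, timeout):
    # Waits only as long as needed, up to an upper bound.
    return wait_until(button.is_visible, timeout=timeout)
```

With a delay recorded on a fast machine (say 0.1 s), `replay_with_fixed_delay` fails as soon as the control needs 0.3 s to appear, while `replay_with_polling` still passes. Polling does not fix the object recognition or resolution problems, so it only addresses one of the failure modes above.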

3. No fit with the development process

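A tool usually has to fit into a development process, but these vendors had no methodology of their own for using their tools; all they offered was screen recording and playback. Tools like Selenium and JUnit, by contrast, are backed by practices such as ATDD and TDD. Without swordsmanship or inner strength behind it, even the finest weapon in your hand is useless.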
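As a rough illustration of what it means for a tool to have a practice behind it, here is a minimal TDD-style sketch. It uses Python's `unittest`, which belongs to the same xUnit family as JUnit. The function `apply_discount` and its rules are hypothetical, made up for this example: in TDD the two tests would be written first and fail, and the function would then be written to make them pass.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical function under test: reduce `price` by `percent`."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100


class ApplyDiscountTest(unittest.TestCase):
    # Each test states one expected behavior before the code exists.

    def test_ten_percent_off(self):
        self.assertEqual(apply_discount(200, 10), 180)

    def test_rejects_negative_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(200, -5)
```

Saved in a file, this can be run with `python -m unittest`. The tests drive the design and stay useful as regression checks, which pure record and playback does not give you.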

4. Too expensive

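Commercial testing software of this kind mostly started at a million or more, which is not something most companies could afford. Worst of all, even after buying it you could not be sure it would work. Our company once called in a bunch of vendors for a contest: whoever could actually test our product would get the order. Only one passed. So expensive did not even mean useful. Would you still want to buy?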

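So once agile arrived, the picture really was turned upside down: there are now plenty of tools that are both free and good. As 安真 said: "I can't go back." So isn't it time to sit down and properly study these free tools? XDDD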